Claude Code Leak: Spyware Claims vs. Reality

April 1, 2026 · 7 min read


- Source code leak was a packaging error, not a security breach.

- Separate malicious 'axios' dependency affected limited users.

- Widespread 'spyware' claims are overblown; risk was contained.

- Verify 'axios' version if you updated Claude Code on March 31, 2026.

Anthropic's Claude Code experienced two distinct issues on March 31, 2026: a source code leak due to a packaging error, and a concurrent, brief window where a malicious `axios` dependency could have been installed. The claims of widespread spyware are largely overblown; the primary threat was reputational and competitive damage from the leak, with direct user risk from the `axios` trojan being limited by its narrow exposure window and swift remediation.

Key Takeaways

- Anthropic confirmed the source code leak was a 'release packaging issue caused by human error, not a security breach.'

- The leaked code, found in an npm source map, contained feature flags, unshipped features, and internal prompts.

- A separate, malicious `axios` dependency (versions 1.14.1 or 0.30.4) was available via npm for Claude Code users who updated between March 31, 2026, 00:21 and 03:29 UTC.

- Anthropic's legitimate telemetry practices are distinct from the malicious `axios` dependency.

Watch Out For

- ⚠ Conflating the source code leak with the malicious `axios` dependency as a single, intentional security breach.

- ⚠ Exaggerated claims of widespread user compromise without verifying the narrow affected window.

- ⚠ Assuming Anthropic's standard telemetry is 'spyware' based on this incident.


What You Need to Know

The Claude Code incident of late March 2026 was a complex event, widely mischaracterized in initial reports. It involved two distinct issues: an accidental source code leak and a separate, albeit concurrent, supply-chain compromise. Understanding this distinction is crucial to assessing the real risk.

The source code leak stemmed from a packaging error: the published npm package included a source map file that embedded the unobfuscated TypeScript source. This exposed internal development details, but did not directly compromise user systems. Separately, a malicious version of the `axios` HTTP client was briefly available through npm, posing a genuine, though limited, security threat to a specific subset of users.

Beginners often conflate any data exposure with a 'breach' or 'spyware.' In this case, Anthropic's own telemetry, which collects usage patterns and error reports, is a standard industry practice and entirely separate from the malicious `axios` dependency. The critical mistake is failing to differentiate between a competitive intelligence leak and an active, user-facing security exploit.


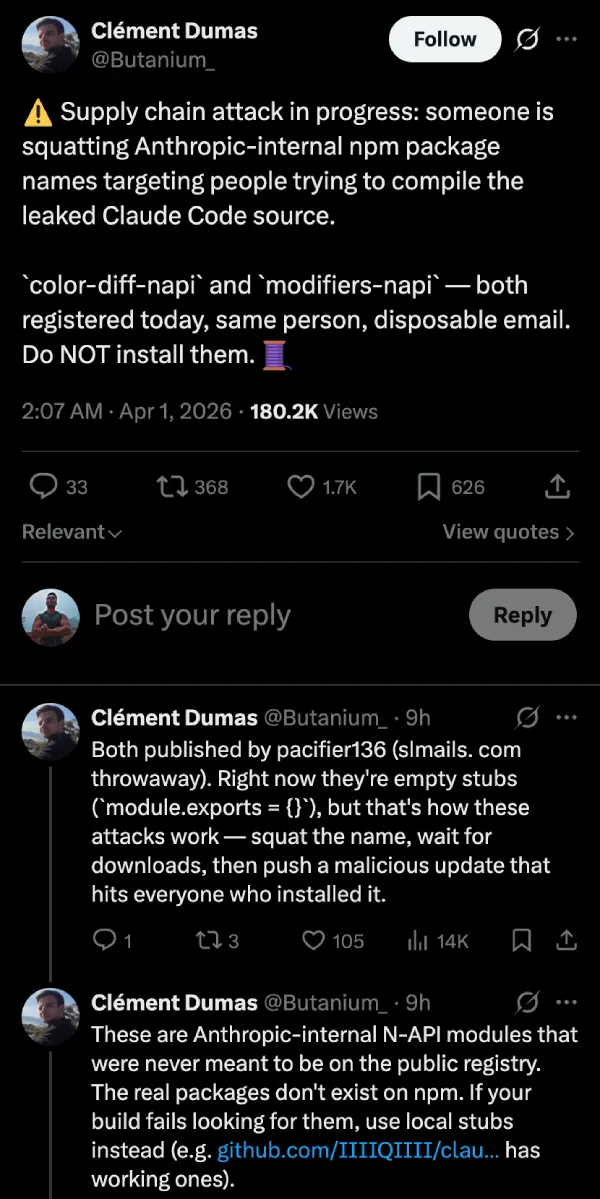

Timeline: How the Rumor Spread

Malicious `axios` Dependency Published

A trojanized version of the `axios` HTTP client (1.14.1 or 0.30.4) was pushed to npm at about 00:21 UTC on March 31, 2026. Claude Code users who installed or updated while it was live could have pulled it in.

Malicious `axios` Dependency Removed

The malicious `axios` package was removed from the npm registry at about 03:29 UTC, limiting its exposure window to just over three hours.

Initial Leak Reports Emerge

Reports surfaced on platforms like Reddit and Twitter, with headlines like 'BIGGEST AI LEAK OF 2026 JUST DROPPED,' claiming Anthropic shipped its entire Claude Code source.

Viral Spread and Speculation

The claims gained significant traction, with community discussions conflating the source code leak with intentional spyware and broader security breaches.

Security Researchers Weigh In

Independent security researchers began analyzing the leaked code and the npm registry, distinguishing between the source code exposure and the `axios` supply-chain risk.

Anthropic Issues Official Response

Anthropic confirmed the source code leak was a 'release packaging issue caused by human error, not a security breach,' and addressed the `axios` dependency.

The Leaked Code: What Security Researchers Found

The core of the Claude Code leak was a packaging error, not an intentional security breach. Security researchers confirmed that Claude Code version 2.1.88, pushed to the npm registry, shipped with a source map file that embedded the unobfuscated TypeScript source. This debug artifact, intended for development, allowed anyone who downloaded the package to reconstruct the original code.
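To make the mechanism concrete, here is a minimal sketch of how original sources can be read back out of a source map. It assumes the standard version 3 source map layout, where `sources` lists file paths and the optional `sourcesContent` array holds the matching original text. The function name `embedded_sources` and the tiny example map are hypothetical illustrations, not material from the leaked package.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Return {source path: original text} for every file the source map embeds."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(paths, contents):
        # An entry is null when the original text was not embedded for that file.
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical two-file source map, for illustration only.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "mappings": "",
})

recovered = embedded_sources(example_map)
for path, text in recovered.items():
    print(f"{path}: {len(text)} characters of original source")
```

A map that omits `sourcesContent` yields nothing here, which is why shipping maps with embedded sources is the step that turns a debug aid into a source disclosure.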

What was found within this exposed source code was significant from a competitive standpoint: feature flags, unshipped features, and internal prompts. These elements offer a glimpse into Anthropic's future development roadmap and internal workings, providing valuable intelligence to competitors.

Crucially, no malicious backdoors or intentional spyware were found within Anthropic's own codebase. The leak exposed proprietary information, but it did not, by itself, introduce malware into user systems. This distinction is vital when separating the source code leak from the separate, malicious `axios` dependency that briefly appeared concurrently.

What Claude Code's Telemetry Actually Does

Telemetry, in the context of software like Claude Code, refers to the automated collection and transmission of data from a remote source to an IT system for monitoring and analysis. Anthropic's Claude Code legitimately collects data such as usage patterns, error reports, and performance metrics like token usage, cost, and latency.

Anthropic states these collections are for improving the product, identifying bugs, and understanding how developers interact with the AI assistant. This data helps them refine features and optimize performance. Users typically opt in to detailed telemetry, with transparency around what data is collected.

This legitimate telemetry is fundamentally different from the 'spyware' claims. The 'spyware' in question was a Remote Access Trojan (RAT) embedded in a malicious `axios` dependency, a third-party component. Anthropic's intended telemetry is a standard, disclosed practice, while the `axios` RAT was an external, unauthorized malicious payload.

Comparing Telemetry Across AI Dev Tools

| Feature | Claude Code (Anthropic) | GitHub Copilot | JetBrains AI Assistant |
|---|---|---|---|
| Data Collection Scope | Usage patterns, error reports, performance metrics (tokens, cost, latency) | Code snippets, usage data, error reports for model improvement | Opt-in detailed telemetry, project-level exclusions |
| Transparency | Stated reasons for collection, OpenTelemetry integration | Publicly documented data usage policies | Clear opt-in mechanisms, privacy notice, zero data retention with third-party providers for text inputs |
| User Control | Opt-in mechanisms, ability to withdraw consent | Settings to disable telemetry, data retention policies | Opt-in, project-level exclusions (.aiignore), support for local models |
| Third-Party Data Sharing | Not explicitly detailed in the sources reviewed, but standard for cloud services | Data shared with Microsoft for model training (anonymized) | Zero data retention with third-party providers for text inputs, local model support |
| Price (as of early 2026) | Varies; check vendor pricing | $10 to $20 per month | Varies; check vendor pricing |

What real people think

Mixed opinions. Sourced from Reddit, Twitter/X, and community forums.

Initial community reaction was a mix of alarm over the 'biggest AI leak' and skepticism, with some developers quickly discerning the nuances between a source code leak and a direct security breach, while others expressed broader concerns about AI vendor security practices.

“BIGGEST AI LEAK OF 2026 JUST DROPPED Anthropic 'super safe' AI company accidentally shipped its entire Claude Code source code in a public npm package.”

Reddit user

“The agent is still a black box, it's the harness around it that's exposed. Wasn't exactly a security breach.”

Reddit user

Many users reacted with sensationalism, labeling it the 'BIGGEST AI LEAK OF 2026' and expressing concern about Anthropic's 'super safe' claims. There was a strong focus on the competitive implications of leaked feature flags.

Some developers quickly identified the technical details, pointing out the npm source map as the leak mechanism and distinguishing it from an intentional security breach. Others highlighted the separate, more critical issue of the malicious `axios` dependency.

There was underlying anxiety about AI vendor security and data privacy, with some users referencing past concerns about user data leakage from AI tools.

Related discussions

- r/Anthropic on Reddit: How we instrumented Claude Code with OpenTelemetry (tokens, cost, latency)

- r/cybersecurity on Reddit: Anthropic Accidentally Leaked Claude Code's Source—The Internet Is Keeping It Forever

- r/ClaudeAI on Reddit: Deploying Claude Code vs GitHub CoPilot for developers at a large (1000+ user) enterprise

- r/programming on Reddit: A bug in Bun may have been the root cause of the Claude Code source code leak.

- r/ClaudeAI on Reddit: i dug through claude code's leaked source and anthropic's codebase is absolutely unhinged

Community Response and Fact-Checking

The initial community response to the Claude Code incident was characterized by rapid, often sensationalized, discourse across platforms like Reddit and Twitter. Claims of 'BIGGEST AI LEAK OF 2026' quickly spread, fueled by the revelation of Anthropic's internal code and the perceived irony of a 'super safe' AI company facing such an issue.

Anthropic quickly issued a statement, clarifying that the source code exposure was a 'release packaging issue caused by human error, not a security breach.' This statement aimed to differentiate the accidental leak from a malicious attack on their systems. Independent security researchers largely corroborated this, confirming the mechanism of the leak via an npm source map file.

However, public discourse frequently conflated the source code leak with the separate, more critical issue of the malicious `axios` dependency. While the leak itself posed competitive and reputational damage, the `axios` trojan represented a direct, albeit time-limited, security risk to users.

Fact-checkers worked to untangle these two distinct events, emphasizing that Anthropic's own code was not found to contain intentional spyware.

Key Incident Statistics

- Duration of malicious `axios` exposure: 3 hours, 8 minutes

- Hidden feature flags leaked: 44

- Unshipped features revealed: 20

Source: Anthropic, security researchers

Real User Risk Assessment

The actual risk to developers from the Claude Code incident is far more contained than initial sensational headlines suggested. The source code leak itself, while embarrassing for Anthropic and strategically valuable to competitors, posed no direct security risk to users. It did not install malware or compromise user data.

The genuine, albeit limited, user risk stemmed from the malicious `axios` dependency. Developers who installed or updated Claude Code via npm on March 31, 2026, specifically between 00:21 and 03:29 UTC, may have inadvertently pulled in a trojanized version of `axios` (1.14.1 or 0.30.4).

This `axios` variant contained a Remote Access Trojan (RAT), capable of providing unauthorized access to the affected system.
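As a rough aid for deciding whether an install falls inside the affected period, the sketch below encodes the window stated above (March 31, 2026, 00:21 to 03:29 UTC) and checks a timestamp against it. The names `WINDOW_START`, `WINDOW_END`, and `in_exposure_window` are illustrative, and treating both endpoints as inclusive is an assumption, not something the reports specify.

```python
from datetime import datetime, timedelta, timezone

# Exposure window as reported in this article (UTC).
WINDOW_START = datetime(2026, 3, 31, 0, 21, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 3, 31, 3, 29, tzinfo=timezone.utc)


def in_exposure_window(installed_at: datetime) -> bool:
    """Return True if a timezone-aware install time falls inside the window."""
    if installed_at.tzinfo is None:
        raise ValueError("installed_at must be timezone-aware")
    # Normalize to UTC so local install times compare correctly.
    return WINDOW_START <= installed_at.astimezone(timezone.utc) <= WINDOW_END


# Length of the window: 3 hours and 8 minutes.
exposure = WINDOW_END - WINDOW_START

# Example: an update at 20:00 on March 30 in a UTC-5 timezone is 01:00 UTC on March 31.
local_install = datetime(2026, 3, 30, 20, 0, tzinfo=timezone(timedelta(hours=-5)))
print(in_exposure_window(local_install), exposure)
```

Converting to UTC first matters because a local timestamp from the evening of March 30 in the Americas can still land inside the window.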

For potentially affected users, immediate mitigation is critical. Verify your `axios` version. If you are running one of the compromised versions and updated within the specified window, it is strongly recommended to reinstall Claude Code and conduct a thorough security scan of your development environment. For all other users, the direct security risk from this incident is minimal.
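One way to verify the `axios` version is to scan an npm lockfile for the two compromised releases. The sketch below assumes a `package-lock.json` in lockfile version 2 or 3 format, where a top-level `packages` object maps install paths such as `node_modules/axios` to entries with a `version` field. The function name `find_compromised_axios` and the sample lockfile are hypothetical, and a global install may not have a lockfile in this form, so treat a clean result as one signal and not as proof.

```python
import json

# Versions named in this article as trojanized.
COMPROMISED_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}


def find_compromised_axios(lockfile_text: str) -> list[str]:
    """List lockfile entries that resolve axios to a compromised version."""
    lock = json.loads(lockfile_text)
    hits = []
    for path, info in lock.get("packages", {}).items():
        # The segment after the last "node_modules/" is the package name,
        # which also matches nested copies like "node_modules/a/node_modules/axios".
        name = path.split("node_modules/")[-1]
        if name == "axios" and info.get("version") in COMPROMISED_AXIOS_VERSIONS:
            hits.append(f"{path}@{info['version']}")
    return hits


# Hypothetical lockfile contents, for illustration only.
sample_lock = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/foo/node_modules/axios": {"version": "1.7.9"},
        "node_modules/axios-retry": {"version": "0.30.4"},
    },
})

hits = find_compromised_axios(sample_lock)
print(hits or "no compromised axios versions found")
```

The exact-name match is deliberate: packages such as `axios-retry` share the prefix but are different packages and should not be flagged.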

Warning: Verify Your `axios` Version

Who This Is For

Claude Code Developers (Updated March 31, 2026, 00:21-03:29 UTC)

You must immediately verify your `axios` dependency version and take remediation steps if affected. Your system may be compromised.

Claude Code Developers (Updated Outside Window)

Your direct security risk from the `axios` trojan is minimal. However, be aware of the source code leak's competitive implications.

AI Industry Competitors

The leaked feature flags and internal prompts offer valuable competitive intelligence. Analyze them for strategic insights.

General Tech Enthusiasts & Media

Understand the distinction between a source code leak and a supply-chain attack. Avoid conflating the two or overstating the user risk.

What Happens Next

Anthropic faces a critical period to rebuild developer trust and reinforce its security posture. Expect immediate and thorough internal audits of their release pipelines and supply-chain security protocols. It is highly probable that independent security audits will follow, with findings potentially made public to reassure the community.

While direct regulatory scrutiny for a packaging error might be limited, the incident highlights broader industry concerns about software supply-chain security. This could prompt discussions around stricter npm package verification or enhanced transparency requirements for AI development tools.

Anthropic's communication strategy will be key; transparent disclosure of remediation efforts and a commitment to preventing future incidents are paramount.

For the broader AI industry, this serves as a stark reminder of the vulnerabilities inherent in complex software ecosystems. Companies will likely re-evaluate their own build processes, dependency management, and incident response plans. The competitive landscape will also shift, as rivals gain insight into Anthropic's unreleased features, potentially accelerating their own development cycles.

Further Reading

Detailed breakdown of the leak mechanism and initial findings.

Technical analysis confirming the npm source map as the leak vector.

Anthropic's official statement and confirmation of the packaging error.

Overview of the leaked feature flags, unshipped features, and internal prompts.

Anthropic's official documentation on Claude Code's legitimate telemetry practices.

