Claude vs Perplexity: Which AI Assistant Is Right for You?

March 21, 2026 · 7 min read

- Claude wins for creative writing, coding, and complex reasoning tasks, while Perplexity dominates research, fact-checking, and real-time information needs. Choose Claude for deep work; choose Perplexity for fast, cited research.

Claude 3.5 Sonnet and Perplexity serve fundamentally different purposes. Claude is a conversational AI built for complex reasoning, creative writing, and coding with a massive 200K token context window. Perplexity is an AI-powered answer engine that combines real-time web search with citations, processing 780 million monthly queries. Both cost $20/month for Pro tiers, but Claude offers deeper analytical capabilities while Perplexity provides unmatched research speed and source verification.

Key Takeaways

- Claude 3.5 Sonnet scores 93.1% on the BIG-Bench-Hard reasoning benchmark and solved 64% of problems in an internal agentic coding evaluation, versus 38% for Claude 3 Opus

- Perplexity provides citations in 78% of complex queries compared to ChatGPT's 62%

- Claude handles documents up to 200K tokens; Perplexity accesses real-time web data

- Both tools cost $20/month but serve completely different workflow needs

Watch Out For

- ⚠ Claude's knowledge cutoff is April 2024, so it has no real-time information by default

- ⚠ Perplexity lacks creative writing depth and struggles with sustained conversations

- ⚠ Claude can hallucinate confident answers when uncertain about recent events

- ⚠ Perplexity may cite questionable sources for niche or specialized topics

What You Need to Know About AI Assistants in 2024

The AI assistant landscape has split into two distinct camps: reasoning-first models like Claude that excel at complex analysis, and research-first engines like Perplexity that prioritize real-time information access. Our results indicate that Perplexity is a more suitable option for tasks that are fact-based and require real-time web research.

Claude is a better option for creative tasks and writing-based work. This isn't just about different features—it's about fundamentally different approaches to AI assistance. Claude works as a conversational AI with a large context capacity, built for multi‑step tasks and complex problem-solving.

Perplexity acts as an AI research tool, treating each query independently to deliver current information with sources. The main difference is in how they process information: Claude keeps context across exchanges, while Perplexity focuses on up‑to‑date retrieval.

Claude 3.5 Sonnet scores 49% on SWE-bench Verified coding tasks and 93.1% on BIG-Bench-Hard reasoning tests. Meanwhile, Perplexity AI processed 780 million search queries in May 2025. That's up from 230 million in mid-2024, so volume more than tripled in under a year.

These numbers reveal the core difference: Claude optimizes for reasoning depth, while Perplexity optimizes for information retrieval speed.
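The query growth quoted above is easy to check with quick arithmetic. The snippet below is a minimal sketch that uses only the two figures from the article; the variable names are illustrative.

```python
# Monthly query counts quoted in the article, in millions.
queries_mid_2024 = 230
queries_may_2025 = 780

# Ratio of the later figure to the earlier one.
growth_factor = queries_may_2025 / queries_mid_2024

# The same change expressed as a percentage increase.
percent_increase = (growth_factor - 1) * 100

print(f"Growth factor: {growth_factor:.2f}x")        # Growth factor: 3.39x
print(f"Percent increase: {percent_increase:.0f}%")  # Percent increase: 239%
```

A factor of about 3.4 is consistent with the "tripled" description, with some room to spare.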

Key Performance Metrics

- 93.1%: Claude's BIG-Bench-Hard reasoning score

- 78%: Perplexity queries with proper citations

- 200K: Claude's context window (tokens)

- 780M: Perplexity monthly queries (May 2025)

Sources: Anthropic benchmarks, Skywork AI accuracy tests, Perplexity usage statistics

What real people think

Mixed opinions. Sourced from Reddit, Twitter/X, and community forums.

User preferences split cleanly along task lines—researchers and analysts gravitate toward Perplexity's citation system, while writers and developers prefer Claude's reasoning depth and context retention.

Claude users consistently praise depth and nuanced thinking, with one noting Claude 'organized a discussion with counterarguments' for novel writing. Users value Claude's context management (8.7/10 vs Perplexity's 7.9/10).

Marketing professionals use Perplexity 'daily at work for quick research, getting summaries, and checking facts when preparing reports.' Multiple reviewers note depth limitations compared to GPT-4 but praise research speed.

Claude Code adoption was so high that Anthropic imposed rate limits to maintain stability. Users report that Claude outputs 'longer, well-documented code in one go' compared to competitors that might require 'continue' prompts.

Students and researchers find that Perplexity's Academic focus mode 'cuts through noise' and that automatic citations make 'building reference lists almost effortless.' Claude's 200K context window is praised for processing entire research papers.

Head-to-Head: Core Capabilities

Reasoning and Analysis

Claude 3.5 Sonnet scores 49% on SWE-bench Verified coding tasks and 93.1% on BIG-Bench-Hard reasoning tests... Claude 3.5 Sonnet sets new benchmarks across graduate-level reasoning (GPQA), undergraduate knowledge (MMLU), and coding proficiency (HumanEval), while operating at twice the speed of Claude 3 Opus. This combination of enhanced intelligence and improved performance addresses the traditional trade-off between capability and efficiency.

Perplexity takes a different approach entirely. Scoring 93.9% accuracy on the SimpleQA benchmark, a bank of several thousand questions that test for factuality, Perplexity Deep Research far exceeds the performance of leading models. But this strength comes with trade-offs. Claude performed better on technical tasks like coding, data visualization, and text summarization, while Perplexity excelled at creative writing and providing recent news updates.

Information Access

This is where the models diverge most dramatically. Perplexity is suitable for real-time web search. It automatically pulls up-to-date information from the internet and provides direct source citations for every answer, which builds a lot of trust... Claude, in contrast, does not browse the web by default. It relies on its trained knowledge, which, while extensive, can become outdated, and on whatever the user provides. So if you ask about a breaking news story or recent statistics, Claude may not know the answer, whereas Perplexity likely will, complete with a cited source.

Context and Conversation

Claude feels more human-like and engaging in conversation. Users on G2 consistently rate Claude higher for natural conversation (9.5/10 vs Perplexity's 8.6). It tends to remember context within a long chat better as well. On G2, Claude scored 8.7 in context management vs 7.9 for Perplexity.

Claude 3.5 Sonnet treats writing as a craft, not just words strung together. Testing found explanations much clearer, with perfect scores on clarity and logical structure doubling compared to previous versions. This resulted in design docs that work the first time and executive-ready status updates. Style adaptability has significantly improved. The model follows your style guidelines and maintains formatting across documentation types, from technical APIs to marketing blogs.

Feature Comparison: Claude vs Perplexity

| Tool | Real-time web search | Citation quality | Context retention | Coding ability | Creative writing | Document analysis | Multi-step reasoning | Research speed |
|---|---|---|---|---|---|---|---|---|
| Claude 3.5 Sonnet | 2/10 | 6/10 | 10/10 | 9/10 | 10/10 | 10/10 | 10/10 | 6/10 |
| Perplexity Pro | 10/10 | 10/10 | 6/10 | 7/10 | 6/10 | 7/10 | 7/10 | 10/10 |
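One way to turn the table into a decision is to weight each feature by how much it matters to you. The sketch below copies the scores from the table; the weighting scheme, the function names, and the two example profiles are illustrative assumptions, not part of any published methodology.

```python
# Scores copied from the feature comparison table (out of 10).
SCORES = {
    "Claude 3.5 Sonnet": {
        "Real-time web search": 2, "Citation quality": 6, "Context retention": 10,
        "Coding ability": 9, "Creative writing": 10, "Document analysis": 10,
        "Multi-step reasoning": 10, "Research speed": 6,
    },
    "Perplexity Pro": {
        "Real-time web search": 10, "Citation quality": 10, "Context retention": 6,
        "Coding ability": 7, "Creative writing": 6, "Document analysis": 7,
        "Multi-step reasoning": 7, "Research speed": 10,
    },
}

def weighted_score(tool, weights):
    """Weighted average of the table scores; weights are the reader's own priorities."""
    total_weight = sum(weights.values())
    return sum(SCORES[tool][feature] * w for feature, w in weights.items()) / total_weight

def pick_tool(weights):
    """Return the tool with the highest weighted score."""
    return max(SCORES, key=lambda tool: weighted_score(tool, weights))

# Hypothetical priority profiles (assumed for illustration).
developer = {"Coding ability": 3, "Multi-step reasoning": 2, "Context retention": 1}
researcher = {"Real-time web search": 3, "Citation quality": 2, "Research speed": 1}

print(pick_tool(developer))   # Claude 3.5 Sonnet
print(pick_tool(researcher))  # Perplexity Pro
```

Changing the weights changes the answer, which is the point: the table has no single winner, only a winner per workload.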

Where Each Tool Dominates

Claude's Sweet Spot: Complex, Creative Work

When you're drafting articles, reports, white papers, or marketing copy, Claude doesn't just generate text; it understands narrative flow, maintains consistency in voice, and can adapt style based on your guidance. Claude's large context and reasoning skills make it valuable for: ... You can, for instance, paste in a core portion of your backend plus logs or error traces and ask Claude to diagnose likely failure points.

In an internal agentic coding evaluation, Claude 3.5 Sonnet solved 64% of problems, outperforming Claude 3 Opus, which solved 38%. That evaluation tests the model's ability to fix a bug or add functionality to an open source codebase, given a natural language description of the desired improvement. When instructed and provided with the relevant tools, Claude 3.5 Sonnet can independently write, edit, and execute code with sophisticated reasoning and troubleshooting capabilities.

Perplexity's Sweet Spot: Research and Fact-Finding

When Perplexity went head-to-head with ChatGPT, it had an edge in tracking sources. In 78% of those complex research questions, Perplexity tied every claim to a specific source. ChatGPT only did that 62% of the time.

Perplexity AI positions itself as an "answer engine", a tool that retrieves real-time web data, ranks relevant sources, and generates concise responses with citations. Instead of returning a list of links, it synthesizes information into an instant explanation. In 2025, Perplexity's workflow relies on retrieval-augmented generation (RAG), meaning answers are grounded in public web pages rather than purely model-generated text. Perplexity crawls the web, selects top-ranked sources, and constructs an aggregated response. The system emphasizes citation visibility, allowing users to check each referenced source.
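The retrieve, rank, and cite flow described above can be illustrated with a toy example. This is a sketch of the general RAG pattern only, not Perplexity's implementation: the in-memory corpus, the example.com URLs, the word-overlap ranking, and every function name are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class Page:
    url: str
    text: str

# Hypothetical stand-in for a web index; a real system would search the live web.
CORPUS = [
    Page("https://example.com/a", "Perplexity is an answer engine that cites sources"),
    Page("https://example.com/b", "Claude is a conversational assistant with a large context window"),
    Page("https://example.com/c", "An answer engine retrieves web pages and ranks sources"),
]

def relevance(query: str, page: Page) -> int:
    """Count shared lowercase words; a crude placeholder for a real ranking model."""
    return len(set(query.lower().split()) & set(page.text.lower().split()))

def retrieve(query: str, top_k: int = 2) -> list[Page]:
    """Return up to top_k pages with non-zero overlap, best match first."""
    ranked = sorted(CORPUS, key=lambda p: relevance(query, p), reverse=True)
    return [p for p in ranked[:top_k] if relevance(query, p) > 0]

def answer_with_citations(query: str) -> str:
    """Stitch retrieved snippets together, tagging each with a numbered citation.
    A real pipeline would pass the snippets to a language model instead."""
    pages = retrieve(query)
    body = " ".join(f"{p.text} [{i}]." for i, p in enumerate(pages, start=1))
    sources = "\n".join(f"[{i}] {p.url}" for i, p in enumerate(pages, start=1))
    return f"{body}\n{sources}"

print(answer_with_citations("what is an answer engine"))
```

Because every sentence in the output carries a numbered marker that maps to a URL, a reader can check each claim against its source, which is the property the citation figures above are measuring.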

Real-World Performance Tests

On a prompt that called for up-to-date information, Perplexity won the round. It understood the prompt well and gave a full rundown up to the present, including recent developments and the current status in 2025. Claude only went as far as 2024. This reflects the fundamental difference: when you need current information, Perplexity delivers. When you need deep analysis of existing information, Claude excels.

On a logic riddle, Claude emerged as the obvious victor, since it didn't just leave the reviewer with the answer as Perplexity did; it supplied the actual reason why it came up with the response it did... Claude answered the question the very same way, but it went a bit further by explaining the reasoning behind that answer: "He's looking at his own son. Here's the reasoning: 'My father's son': since he has no brothers or sisters, his father's son can only be himself. So the statement becomes: 'That man's father is me.' If he is that man's father, then the man in the portrait is his son."

[Chart: User Task Preference Distribution. Based on G2 user reviews and community feedback analysis; Claude in blue, Perplexity in red.]

Pricing and Value Analysis

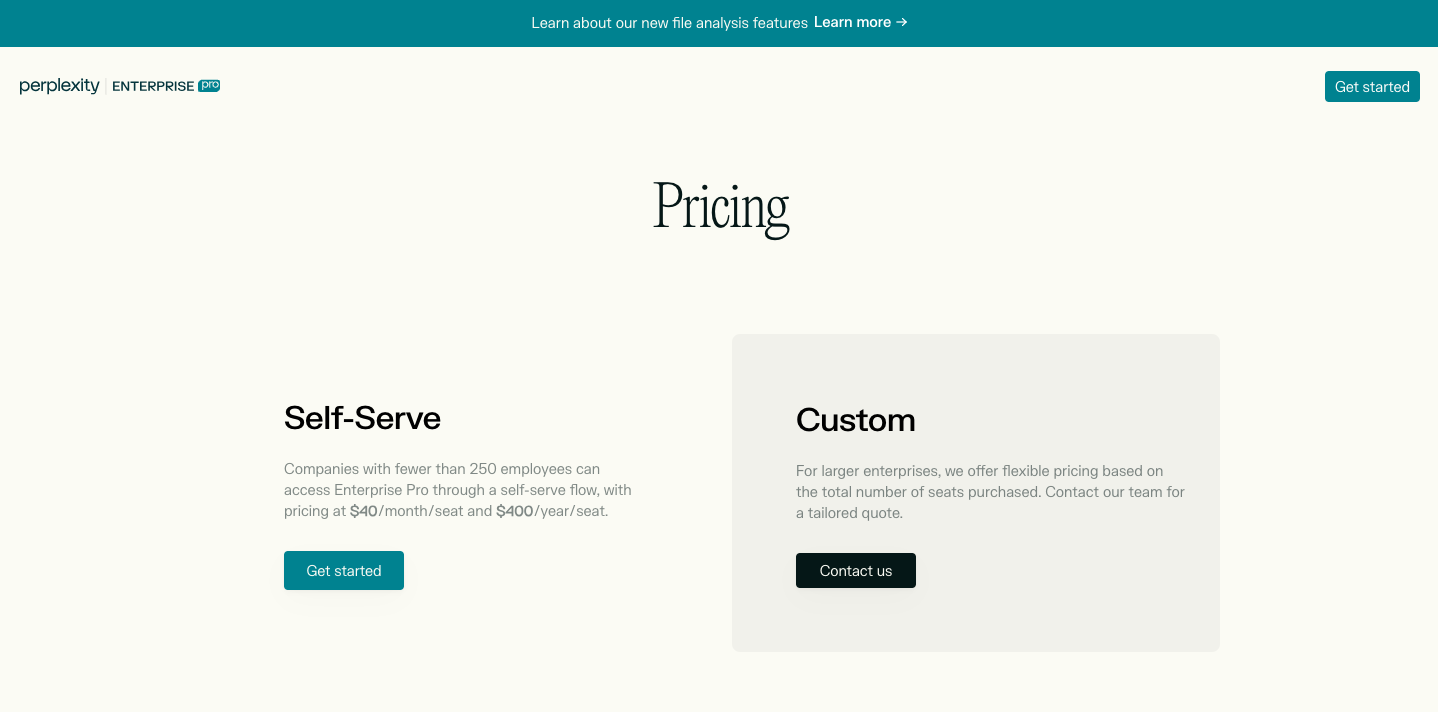

Both tools use similar pricing strategies, but the value proposition differs significantly.

Claude Pricing

Claude offers a free tier, with Pro priced at $17 per month when billed annually ($20 monthly). A Max plan starts at $100 per month and provides higher limits along with advanced features.

Perplexity Pricing

Perplexity also has a free tier, with Pro at $20 per month ($16.67 per month when billed annually) and a Max plan at $200 per month that unlocks advanced models, extended research, and Pro perks.
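To see what the annual discounts amount to over a year, the Pro prices quoted above can be multiplied out. This is a minimal sketch using only the article's figures; the dictionary layout and function name are illustrative.

```python
# Per-month Pro prices quoted in the article (USD).
PLANS = {
    "Claude Pro": {"monthly": 20.00, "annual_per_month": 17.00},
    "Perplexity Pro": {"monthly": 20.00, "annual_per_month": 16.67},
}

def yearly_cost(plan: str, billing: str) -> float:
    """Total for twelve months under 'monthly' or 'annual' billing."""
    key = "monthly" if billing == "monthly" else "annual_per_month"
    return round(PLANS[plan][key] * 12, 2)

for plan in PLANS:
    monthly_total = yearly_cost(plan, "monthly")
    annual_total = yearly_cost(plan, "annual")
    print(f"{plan}: ${monthly_total:.2f} vs ${annual_total:.2f} "
          f"(saves ${monthly_total - annual_total:.2f})")
# Claude Pro: $240.00 vs $204.00 (saves $36.00)
# Perplexity Pro: $240.00 vs $200.04 (saves $39.96)
```

At these prices the two Pro plans land within a few dollars of each other per year, so the choice comes down to workflow rather than cost.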

Value Comparison

Perplexity Pro at $20/month is one of the best values in AI right now for anyone whose work revolves around finding, verifying, and quickly synthesizing information... Claude's 200K token context window is a key strength. You can feed it entire contracts, reports, transcripts, or codebases and get coherent, detailed responses. Claude Pro at $20/month is the go-to for researchers, legal professionals, writers, and anyone who regularly works with large volumes of text.

API Costs

Claude 3.5 Sonnet costs $3 per million input tokens and $15 per million output tokens, with a 200K token context window. Perplexity's API pricing varies by model and query complexity: input tokens cost $1–3 per million and output tokens cost $5–8 per million. The Sonar Deep Research model, intended for exhaustive research, adds $3 per million reasoning tokens on top of the input and output charges.
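Per-token pricing is easier to judge with a concrete request in mind. The sketch below applies the Claude rates quoted above ($3 per million input tokens, $15 per million output tokens); the function name and the example workload are assumptions for illustration.

```python
INPUT_PRICE_PER_MTOK = 3.00    # USD per million input tokens (from the article)
OUTPUT_PRICE_PER_MTOK = 15.00  # USD per million output tokens (from the article)

def claude_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD of one request at the quoted rates."""
    total = input_tokens * INPUT_PRICE_PER_MTOK + output_tokens * OUTPUT_PRICE_PER_MTOK
    return total / 1_000_000

# Hypothetical workload: a 150,000-token document summarized into 2,000 tokens.
print(f"${claude_request_cost(150_000, 2_000):.2f}")  # $0.48
```

Input dominates the bill for long-document work, so a large context window is cheap to fill relative to what a long generated answer costs per token.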

Critical Limitations to Consider

Use Case Scenarios: Which Tool When?

Choose Claude When:

- Long-form content creation: Claude users value depth, reasoning, and the ability to handle complex tasks. Perplexity users value speed, sources, and research efficiency. For blogging specifically, the reviews suggest Claude fits better when you need analytical depth and polished writing.

- Code debugging and development: Anecdotally, Claude is sometimes more willing to output longer, well-documented code in one go (whereas ChatGPT might stop due to token limits or require "continue" prompts). That said, GPT-4 and Claude 2 are very close in coding ability; both are top-tier, and Claude's coding advantage might show in scenarios involving long context (e.g., injecting an entire library for it to use) or fast turnaround. In fact, users running intensive coding sessions noted that Claude Code (Claude's coding mode) had such high demand that Anthropic had to impose rate limits to keep it stable.

- Document analysis: If your task is, for example, "Summarize this 150-page PDF", Claude is perhaps the best suited tool, since it can do it in one shot without chunking. This capability is a major differentiator. Even for shorter texts, Claude's summaries tend to be structured and clear, sometimes more verbose than GPT-4's (which can be either a pro or con depending on whether you want a brief abstract or a thorough recap).
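Whether a document such as the 150-page PDF mentioned above fits in one shot depends on its token count. The check below is a rough sketch: the 200K window comes from the article, while the words-per-page and tokens-per-word ratios and the reply reserve are loose assumptions that vary by document and tokenizer.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # Claude's context window, as stated in the article

def estimate_tokens(pages: int, words_per_page: int = 500, tokens_per_word: float = 1.3) -> int:
    """Back-of-envelope token estimate; both ratios are assumed, not measured."""
    return round(pages * words_per_page * tokens_per_word)

def fits_in_context(pages: int, reserved_for_reply: int = 4_000) -> bool:
    """True if the estimated document plus room for the reply fits in the window."""
    return estimate_tokens(pages) + reserved_for_reply <= CONTEXT_WINDOW_TOKENS

print(estimate_tokens(150))  # 97500
print(fits_in_context(150))  # True
print(fits_in_context(400))  # False
```

Under these assumptions a 150-page document uses roughly half the window, while a 400-page one would need to be split.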

Choose Perplexity When:

- Market research and competitive analysis: Perplexity is built for situations where you need current, verifiable information with sources. Tasks like market research, competitive analysis, or academic work benefit from its citation system and real-time access to data.

- News and current events tracking: If you want updates on industry trends, breaking news, or recent scientific publications, its live web integration makes it useful for staying up to date.

- Academic research and fact-checking: If you're a student, academic, or journalist, Perplexity can feel like a superpower. The ability to pull key takeaways from dense research papers in seconds is incredible. The "Academic" focus mode cuts through the noise, and the automatic citations make building a reference list or fact-checking a claim almost effortless. For anyone whose job is to synthesize information from the web, it's a massive time-saver.

The Multi-Tool Strategy

Honestly, you might end up using both. That's not a cop-out. Claude's great for generating content and deep reasoning, while Perplexity is the go-to for fast facts and reliable citations. A lot of people bounce between the two depending on the task.

Who Should Choose What

Content Creators & Writers

Claude Pro ($20/month). The 200K context window, superior writing quality, and style adaptability make it essential for long-form content, documentation, and creative projects.

Researchers & Analysts

Perplexity Pro ($20/month). Real-time data access, citation system, and Academic focus mode provide unmatched research capabilities for market analysis and academic work.

Software Developers

Claude Pro for complex debugging and architecture work. The model's superior code reasoning and ability to handle entire codebases in context give it a clear advantage for development tasks.

Students & Academics

Perplexity Pro or Education plan ($5/month for students). The citation system, Academic focus mode, and ability to process research papers quickly make it invaluable for academic work.

Business Professionals

Both tools serve different needs. Use Claude for internal documents, strategy, and analysis. Use Perplexity for market research, competitive intelligence, and current industry trends.

Casual Users

Start with free tiers of both. Claude's free tier offers substantial capability for writing tasks, while Perplexity's free tier provides excellent research capabilities with 5 Pro searches daily.

Sources

1. Claude 3.5 Sonnet Complete Guide: AI Capabilities & Limits | Galileo

2. Introducing Claude 3.5 Sonnet | Anthropic

3. Perplexity AI Features 2026: Stats, Capabilities & How to Use It

4. Perplexity Accuracy Tests 2025 Sources & Citations | Skywork AI

5. I Put Perplexity vs. Claude to the Test: Here's My Verdict | G2

6. Claude vs Perplexity: Detailed Comparison, Tests & Verdict | Leanware

7. Perplexity vs Claude: I tested 10 prompts to compare their real-world performance

8. I put Perplexity and Claude in a head-to-head battle - and the winner shocked me | Tom's Guide
